By Paolo Azzoni / INSIDE industry Association / Published on March 02, 2026

Dear reader,

Europe’s digital future will not be shaped by isolated technology breakthroughs, but by our ability to embrace interdisciplinarity across layers, enabling and feeding entire value networks, with innovation generating impact and business opportunities. This issue of INSIDE Magazine captures this transitional time: from uncontrolled acceleration to well-structured architecture, from capability to accountability, from creativity to trustworthy innovation.

This issue begins with a look at autonomous AI agents, a follow-up to our last discussion on interpretative intelligence. In the previous issue, I argued that the future belongs to the wisest AI, rather than the fastest AI. Now, we must confront a deeper issue: how do we ensure increasingly autonomous systems operate according to our human intent? As AI transitions from being an assistant to being an actor, performance alone can no longer be the only consideration. Trust in autonomous systems requires boundaries, oversight and architectural order. Without them, autonomy becomes fragility, while with them it becomes resilience.

The need for structure and responsibility extends beyond just AI. The ambition and scale of the collaborative research ecosystem in Europe continues to broaden, which requires new levels of professional coordination and strategic alignment. Scientific excellence must also be matched by excellence in execution. Organisational architecture – clarity of roles, agility in governance and transparency in processes – is the invisible infrastructure that transforms ideas into impact and business opportunities.

Architecture is, indeed, a unifying theme here. For example, Edge-AI systems are embedded in cars, factories, healthcare platforms, and infrastructure. Therefore, they must be designed with interoperability, dependability, and optimization built in from the beginning. Shared reference models and system-level frameworks are not just technical formalities; rather, they represent the foundation of sovereignty and long-term competitiveness.

At the semiconductor edge, we are also rethinking the architectural level of the physical layer. For example, new non-volatile memory technologies for energy-efficient Edge-AI will reduce latency, minimize energy consumption, and allow persistent intelligence to exist at the edge. This rethinking of architecture at the semiconductor level serves as a reminder that autonomy is ultimately a function of physics; and that Europe has an advantage due to its ability to master both extremes: materials and meaning.

This same convergence is visible in physical AI, where bio-inspired sensing architectures enable physical systems to perceive reality. When intelligence enters the physical world, reliable sensing is what determines the safety and scalability of the entire system. So vision and the ability to use edge-intelligent devices will serve as fundamental enablers to deploy responsible, trustworthy systems.

As if this were not enough, many of the advanced components are being converted into solutions that put humans first. The H2TRAIN project demonstrates how sensors, embedded AI and digital twins can help to support assisted living, sport coaching and rehabilitation. It shows how Europe can connect chips to care, algorithms to well-being, and innovation to societal value.

We see this same spirit of integration in our growing community: the new members presented in this issue bring strengths in semiconductors, wearables, system architecture, and research coordination. They collectively believe that complexity is actually an opportunity to work together. Our INSIDE ecosystem is increasing in scale, but also in importance, establishing bridges between disciplines, industries, and countries. And ecosystems are ultimately built by people who know how to design, implement, and support complex systems. Initiatives like the CPS Summer School intend to train the next generation of system architects to ensure Europe has the necessary resources to convert research excellence into industrial leadership over time.

Whether speaking about AI agents, edge devices, semiconductor technology, physical AI or digital applications, the one constant is that Europe’s competitive advantage lies in integration. Those who create the next wave of digital transformation will not necessarily be the fastest innovators in isolation, but rather those who build responsibly, architect strategically, and connect technologies with purpose.

As we move forward into a more intelligent world, intelligence will not be just autonomous, but also aligned, architected and accountable … and it is ours to build.

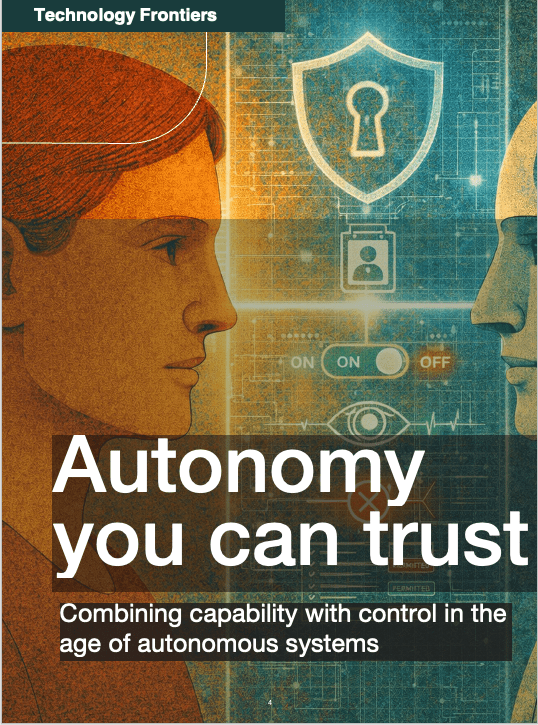

The future of AI is not about speed but about achieving a higher level of knowledge and wisdom: autonomous systems that understand what they are doing, why it is relevant, and how their actions will affect the world around them [1]. However, as interpretative intelligence becomes more sophisticated, a new class of autonomous agents is emerging that represents the next step beyond the simple concept of assistant. These systems can plan and execute tasks, coordinate tools, and operate within a digital environment without the need for human interaction or supervision. AI is no longer just a user interface; it is now an entity capable of acting independently.

But when systems acquire the capability to act autonomously, this power goes hand-in-hand with maintaining control over those capabilities. Without well-defined guardrails, an autonomous system can create a systemic failure, compromise security and confidentiality, and undermine trustworthiness in digital ecosystems. Therefore, the challenge is not to slow down innovation through restrictions or limitations but to rethink and redesign autonomy in a responsible way. If interpretative AI emphasizes that intelligence must be aligned with meaning, the creation of autonomous systems raises a new question: how do we ensure that actions stay aligned with the intentions behind them? The answer will determine whether autonomous systems emerge as drivers of resilience and productivity, or merely as a vector of risk.

While you sleep

Imagine a common daily routine: you go to sleep, and while you are sleeping, your digital agents are working on your behalf, tweeting, emailing, updating projects, committing code to repositories, negotiating meeting times, and making phone calls with a synthetic version of your voice which sounds exactly like you. They are monitoring dashboards, assessing market trends, and summarizing breaking news while changing priority levels in real-time.

When you wake up each morning, many hours of cognitive and manual labour will have already been performed. You will look at your phone and see a short message from your agents stating: “The report has been completed; the draft has been sent to be reviewed; two meetings have been rescheduled; we have resolved a supplier problem”.

This is not science fiction

The purpose of the autonomous agents being developed today is to perform persistent, continuous and goal-driven activities. Unlike current assistants that react to commands, the new generation of AI agents operates continuously over time, handles multi-step workflows, coordinates work across multiple systems, and initiates actions to achieve intended objectives without waiting for step-by-step instructions. They will deduce the next action, retrieve the tools needed to carry out that action, and implement the decision made in a manner that mimics normal human behaviour in the digital world.

For example, in a work environment an agent could be responsible for handling communication, ensuring compliance, drafting contracts, changing cloud platform configurations, or answering customer service inquiries 24/7.

In personal life, they could manage finances, schedule travel, maintain subscriptions, interact with service providers, or negotiate electronic transactions on your behalf with vendors.

The benefits of such agents are obvious: increased productivity, reduced mental burden and, more generally, extended human capabilities beyond biological limits.

AI is no longer merely an assistant; it is acting without supervision on behalf and in place of a person. It is representing that person, which introduces responsibility, exposure and risk for the actions taken by AI [2].

From assistant to operator

One example is OpenClaw [3], part of a new generation of autonomous and persistent AI agents. What does this mean?

To clarify, this agent is not just a simple chatbot waiting for your next prompt; there is no continuous question/answer loop. You provide OpenClaw with a task, such as “Manage my social media presence over the next week”, and it simply begins the task with a plan. To accomplish the high-level goal, OpenClaw will decompose that goal into a sequence of smaller micro-actions.

If engagement drops, it modifies the adopted strategy. If a new trend emerges, it reacts. It does not simply execute instructions; it iterates toward outcomes and initial objectives.

In the social media example, it will break down the task: review your past posts, determine which types of post received the highest level of engagement, keep track of popular topics, draft content for posts, create a schedule for posting them, respond to comments, modify your writing style based on reactions from your followers, and monitor performance metrics. If during this process your engagement starts to drop below normal levels, OpenClaw will change its strategy. If new trending topics arise, it will adapt and react to those trends. It does not simply execute commands but continuously iterates until it reaches a successful outcome.

In terms of technology, this class of agents combines large language models, structured and vector-based memory layers, hierarchical task planners, external tool orchestration, and iterative feedback loops for self-correction and goal tracking. Agents can call an external API, operate user interfaces, maintain intermediate results, evaluate their own progress, and revise their plans dynamically.
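The plan, act, evaluate and revise cycle described above can be sketched in a few lines of Python. This is a minimal illustration only, not OpenClaw’s actual implementation: the names `AgentState`, `make_plan`, `TOOLS` and `run_agent` are hypothetical, the planner is a fixed stub standing in for a language model, and the tools are placeholders for real API calls.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    """State that persists across iterations (hypothetical structure)."""
    goal: str
    plan: list[str] = field(default_factory=list)
    results: dict[str, str] = field(default_factory=dict)


def make_plan(goal: str) -> list[str]:
    # Assumption: a fixed stub standing in for an LLM-backed task planner.
    return ["review_past_posts", "draft_post", "schedule_post"]


# Assumption: placeholder tools; a real agent would call external APIs here.
TOOLS = {
    "review_past_posts": lambda: "ok",
    "draft_post": lambda: "ok",
    "schedule_post": lambda: "ok",
}


def run_agent(state: AgentState, max_steps: int = 10) -> AgentState:
    """Plan, act, evaluate and revise until the plan is empty or the budget runs out."""
    if not state.plan:
        state.plan = make_plan(state.goal)
    steps = 0
    while state.plan and steps < max_steps:
        step = state.plan.pop(0)
        outcome = TOOLS[step]()         # act through a tool
        state.results[step] = outcome   # keep intermediate results
        if outcome != "ok":             # evaluate progress
            state.plan.insert(0, step)  # revise: put the failed step back
        steps += 1
    return state
```

The `max_steps` budget is a deliberate design choice: even a toy loop should not be able to run forever.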

Persistence is their key factor, which means continuing to work over time and maintaining context and state, instead of resetting them after each interaction.

OpenClaw is not an interface layer, but rather an operational layer that transforms abstract goals into activities to be executed in digital environments.

The change from responding to prompts to pursuing objectives is what makes this generation of agents fundamentally different.

When autonomy becomes accessible

Many of the available agents released by large technology companies are extremely powerful … but also incredibly expensive. They frequently rely on state-of-the-art proprietary models and are very compute-intensive. Additionally, many of these agents can become unstable when run continuously without supervision for a prolonged period of time. Although they present impressive autonomy, they are not persistent: running them for a long period (i.e., days) without human supervision can cause unpredictable loops, reduce performance, or increase operating costs.

By contrast, OpenClaw seems to be one of the first agents to exhibit the level of stability that allows it to run locally on a personal computer for long periods of time. More importantly, it utilizes free and open-source models, dramatically reducing operational costs. OpenClaw allows users (individuals, small teams, startups, etc.) to run autonomous agents locally, rather than exclusively in hyperscalers’ cloud infrastructures. The combination of persistence, stability, and low cost significantly increases technology accessibility and adoption.

Accessibility is exactly what influences the way autonomous agents will be perceived and used in the future: when they become affordable, stable, and easy to use, they will no longer be viewed as an experimental tool of large, high-tech companies owning the necessary technology to build them. They will instead be seen as infrastructure: widely distributed, continuously operating, and potentially ubiquitous.

Where autonomy becomes a risk

“That’s so cool, I want it.”

Are you really sure?

Because to unlock the full potential of this next generation of autonomous agents, we must grant them something that has never been granted before: access. Not just some access, not access in a sandbox, but total freedom to operate as you do. Some examples:

- To manage your social media, an agent needs access to your accounts.

- To answer emails on your behalf, it needs access to your inbox.

- To negotiate a contract, it needs your identity card or passport and your signature.

- To optimize your finances, it needs access to your bank account and interfaces.

- To coordinate work, it needs access to internal systems, repositories, customer databases, calendars, and messaging systems.

In short, to achieve autonomy, the agent must be granted authority, which means providing credentials, permissions, tokens, access to memory, and the ability to retain persistent control across various digital environments. The agent must see what you see, be able to perform actions as you perform them, and represent you in environments that were not previously designed for non-human actors.

That is where the real risks begin.

Autonomy is not the panacea to fix chaos

The real problem with autonomous AI is that it is a brutal accelerator, however you adopt it … even if you adopt it wrongly: without direction, speed is just a more efficient way to get lost. Agents optimize, copy, and expand whatever logic you embed in them; without a guiding framework, they will produce errors at an accelerated pace, automate misalignment, and generate disruptive noise.

Autonomous agents do not create strategies. They execute them. They operationalize whatever structure, or lack of structure, already exists.

There is a great deal of hype around complex workflows, perfect prompts, and agents that “make your life easier”. Yet autonomy by itself cannot cure confusion and chaos; it intensifies and magnifies them. With unclear priorities, undefined processes, and inconsistent objectives, an autonomous agent multiplies the confusion, simply executing it automatically and at high speed.

An autonomous system must be something else: conceptually, at its core, it is the result of a decision that you made once, a conceptual strategy that you don’t revisit every day. It is a structured entity that will allow you to take micro-decisions out of your cognitive load, eliminate repetitive actions or processes, and allow you to shift your concentration elsewhere. An autonomous system must establish operational clarity.

This distinction becomes particularly important in the enterprise environment. If workflows are fragmented, there is no clear governance, data is poorly structured, or objectives are not aligned between departments, simply having an autonomous system will not provide a solution to the problem; it will merely scale the problem up. Rapid decision cycles with no consistent direction can often create inefficiencies, propagate errors in interconnected systems, and install flawed assumptions into the organization.

In industrial environments, the effect of this type of governance is much more tangible. For example, an autonomous optimization engine for supply chains, energy grids or production lines has the potential to create tremendous efficiency. However, to create efficiency, there must be specific constraints, risk thresholds and escalation procedures clearly defined.

Without a robust operational framework, autonomy becomes an amplifier of systemic fragility.

Autonomous systems act as operational multipliers: they allow for the acceleration of processes, replication of decisions, and extended reach of operations. Autonomous systems will never replace governance, architecture, or strategic clarity, and as the level of autonomy increases, so too does the dependence on the underlying system associated with it.

Before autonomous systems are adopted at scale, organisations must have clear governance frameworks, defined processes, clear responsibilities, and a mature architecture that supports the governance framework during implementation. In both the enterprise and industrial worlds, where there is speed without governance, innovation is simply risk at scale.

Security: the expanded attack surface

An autonomous agent is fundamentally distinct from a typical software application: it is always operating with access to multiple systems simultaneously. If it is hacked, it does not expose a single account or system … it exposes an entire ecosystem.

Unlike traditional software, an autonomous agent can move laterally across tools without limitations. It can read, send and receive e-mails, execute code, modify files, trigger payment transactions, and interact with third-party services and platforms. A vulnerability in any single component of an agent can have a knock-on effect across all other components and, because agents operate for long periods, a compromise may go undiscovered for a long time.

The more capable the agent, the larger the attack surface. Increasing autonomy increases the overall risk of attacks.

Misalignment: when goals drift

There is a second threat associated with autonomous systems, one that arises not from a malicious attack but from goal drift. When you provide a high-level goal to an autonomous system, like increasing engagement or reducing costs, there are trade-offs that the agent must make. But what assumptions will the agent make with regard to the trade-offs? What constraints will it infer? What norms will it follow?

In complex situations, optimization may conflict with the agent’s original intent. An agent that is trying to maximise engagement could potentially increase the amount of polarising content being generated by the system. An agent that is trying to minimise costs could potentially diminish the quality of the content it generates. An agent with an operational intent of being “proactive” might take action before having a complete overview of the situation.

The risk does not come from the agent’s disobedience, but rather from the agent’s literal compliance with your directives.

Autonomy without interpretative depth can turn efficiency into fragility.

Legal liability: who is responsible?

When an autonomous agent operates, who is accountable?

The user?

The developer?

The organization deploying it?

The provider of the underlying model?

Existing legal frameworks were conceived for human actions and intents, and AI agents blur that line. Persistent agents act on delegated authority but may also produce results that were not specifically requested or intended. For example, if an agent issues a defamatory comment, violates compliance regulations, or mishandles private data, responsibility does not disappear, but it becomes ambiguous. This ambiguity may itself create a systemic risk.

Reputational damage: representation at scale

The role of an autonomous system is not just to execute a task but to represent your identity!

They speak in your voice.

They respond in your tone.

They drive for you.

They act under your name.

An incorrect post, a misunderstood message, or an inappropriate automated response can instantly be replicated throughout multiple networks. In corporate environments, this can damage brands; in personal contexts, it can affect careers and relationships; in a vehicle, it can cause an accident.

Unlike human mistakes, agent-driven errors can replicate at machine speed.

Once an autonomous agent has behaved in a certain way over a sustained period, that behaviour becomes part of your digital persona.

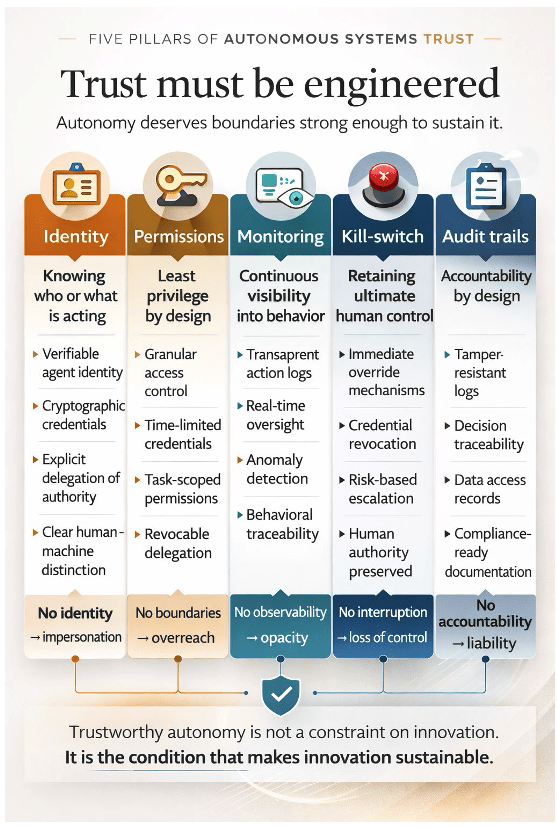

Five pillars of autonomous systems trust

If autonomous agents are expected to operate continuously and persistently in open digital environments, trust cannot be assumed … it must be designed and engineered! This means moving from experimentation to architecture. I believe that five foundational pillars must be established to create a reliable basis for trustworthy autonomous systems.

Autonomy is powerful.

Autonomy is efficient.

Autonomy is scalable.

But autonomy is also exposure.

The real challenge is not whether we can build autonomous systems.

It is whether we can design them with boundaries strong enough to deserve our trust.

1. Identity: knowing who or what is acting

An autonomous system must be uniquely identified, and all actions it takes must be linked to a specific authenticated entity. This includes assigning it cryptographic identities, scoped credentials, and explicit delegation of authority. Autonomous systems should not function as anonymous extensions of users but rather as delegated digital representatives with identifiable and traceable identities. A distinct identity ensures that actions can be logged, audited, and attributed without confusion between people and machines.

Autonomy without identity becomes impersonation.
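To make the idea of attributable actions concrete, here is a minimal sketch that tags each action record with an agent identifier and an HMAC computed from a per-agent secret key. The function names `sign_action` and `verify_action`, the record layout and the agent identifier are all illustrative assumptions. HMAC is a symmetric scheme chosen here only because it is in the Python standard library; a production system would more likely use asymmetric signatures so that verifiers never hold the signing key.

```python
import hashlib
import hmac
import json


def sign_action(agent_id: str, key: bytes, action: dict) -> dict:
    """Bind an action to an agent identity with an HMAC-SHA256 tag."""
    payload = json.dumps({"agent_id": agent_id, "action": action}, sort_keys=True)
    tag = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify_action(key: bytes, record: dict) -> bool:
    """Return True only if the record was tagged with `key` and not altered."""
    expected = hmac.new(key, record["payload"].encode(), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, record["tag"])
```

A record signed by one agent fails verification under any other agent’s key, which is what allows a log entry to be attributed to a specific delegated identity rather than to the user in general.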

2. Permissions: least privilege by design

Autonomous systems need to be granted access to operate, but that access needs to be granular and revocable. They should not have full-system privileges but rather operate under a “least privilege” access model. For instance, they should have time-limited credentials, temporary permissions that correspond to a specific task, and access controls that reflect their context (prior work, current date and time). Accessible resources should also be segmented by boundaries and scopes, so that user information is not compromised.

For example, an agent managing social media should not have access to a bank account. An agent optimizing infrastructure should not read confidential human resources files. The permissions must be dynamic and adjustable, and the delegation of permissions should not be permanent or unlimited.

Autonomy without boundaries becomes overreach.
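A scoped, time-limited and revocable grant can be sketched as follows. The names `Grant`, `revoked_grants` and `is_allowed`, and the scope strings such as `social:post`, are hypothetical and chosen only for illustration; real deployments would typically rely on an existing authorization system rather than hand-written checks.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Grant:
    """A delegation of specific scopes to one agent, valid until `expires_at`."""
    grant_id: str
    agent_id: str
    scopes: frozenset[str]
    expires_at: float  # Unix timestamp


# Assumption: an in-memory revocation list; a real system would persist this.
revoked_grants: set[str] = set()


def is_allowed(grant: Grant, agent_id: str, scope: str,
               now: Optional[float] = None) -> bool:
    """Allow an action only if the grant matches, is unexpired and not revoked."""
    now = time.time() if now is None else now
    return (
        grant.grant_id not in revoked_grants
        and grant.agent_id == agent_id
        and scope in grant.scopes
        and now < grant.expires_at
    )
```

With a grant limited to `social:post`, a request for a scope such as `bank:transfer` is refused by default: anything not explicitly delegated is denied.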

3. Monitoring: continuous visibility into behaviour

Persistent autonomous systems require continuous supervision. Self-governing entities must produce accessible records of their activities, choices and usage of tools. This information must enable real-time observation of what the system is currently executing and what resources it has consumed in addition to providing insight regarding the motivations for its actions.

Behavioural anomalies, meaning deviations from established patterns, should be detected whether they result from drift, mismatched expectations or compromise. Monitoring should not be confused with micromanagement; it is a means of providing visibility and transparency.

Autonomy without observability becomes opacity.
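One simple way to detect deviations from an established pattern is to compare a current measurement, for example the number of actions an agent performed in the last hour, against its historical baseline. The sketch below uses a z-score with a threshold of three standard deviations; the function name `is_anomalous`, the choice of metric and the threshold are illustrative assumptions, and real monitoring would combine many signals.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], current: float,
                 threshold: float = 3.0) -> bool:
    """Flag `current` if it lies more than `threshold` standard deviations
    away from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to establish a baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return current != mu  # perfectly flat baseline: any change is a deviation
    return abs(current - mu) / sigma > threshold
```

For a baseline such as `[10, 12, 11, 9, 10, 11]` actions per hour, a reading of 11 passes while a reading of 40 is flagged for review.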

4. Kill-switches: retaining ultimate human control

No autonomous system should ever be permanently autonomous. Users and organizations should always be able to halt, pause, override or terminate any operation instantly. This can include, for example, the immediate revocation of credentials, emergency shutdown mechanisms, and escalation protocols for high-risk decisions.

Autonomous systems should be designed to defer their actions to human control in situations of uncertainty or when performing a high-impact action. Control should be asymmetrical: the human will always have the ability to stop the system from completing its action.

Autonomy without interruption becomes loss of control.
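The asymmetry of control can be illustrated with a small sketch in which the agent checks a kill switch before every step and defers high-impact steps to a human approval callback. The names `KillSwitch`, `run_steps` and `approve_high_impact` are hypothetical; a real system would also need to revoke credentials and interrupt steps that are already running.

```python
import threading
from typing import Callable


class KillSwitch:
    """Thread-safe stop flag that a human operator can trigger at any time."""

    def __init__(self) -> None:
        self._stop = threading.Event()

    def trigger(self) -> None:
        self._stop.set()

    def is_triggered(self) -> bool:
        return self._stop.is_set()


def run_steps(steps: list[tuple[str, bool]], kill_switch: KillSwitch,
              approve_high_impact: Callable[[str], bool]) -> list[str]:
    """Execute (name, high_impact) steps in order and return the names executed.

    Stops as soon as the kill switch is triggered; skips any high-impact
    step that the human approval callback rejects.
    """
    executed = []
    for name, high_impact in steps:
        if kill_switch.is_triggered():
            break  # human override always wins
        if high_impact and not approve_high_impact(name):
            continue  # defer to the human: not approved, so not executed
        executed.append(name)
    return executed
```

Because the check happens before each step rather than once at start-up, triggering the switch halts the agent at the next step boundary.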

5. Audit trails: accountability by design

Finally, all autonomous systems must provide traceable proof of their actions. For example, you should be able to audit what was decided, what data was accessed, what was done, and all external communications that were generated. These audit logs should be structured, tamper-proof, and reviewable for compliance and evaluation on both legal and ethical grounds. Trust isn’t promised … it is demonstrated through traceability.

Autonomy without accountability becomes liability.
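A common way to make a log resistant to silent modification is to chain entries with hashes, so that each entry commits to the one before it. The sketch below is a minimal illustration with hypothetical names (`append_entry`, `verify_chain`) and a simple JSON layout. It makes tampering detectable rather than impossible: someone able to rewrite the entire chain could still forge it, so in practice the latest hash would be anchored somewhere the agent cannot write.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(event: dict, prev_hash: str) -> str:
    body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


def append_entry(log: list[dict], event: dict) -> None:
    """Append an event whose hash covers both the event and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": _digest(event, prev_hash)})


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited, removed or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True
```

Changing a single field in an early entry invalidates that entry’s hash and, through the chain, every entry after it.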

Trustworthy autonomy is not a constraint on innovation; it is the condition that makes innovation sustainable. If the previous wave of AI technology forced us to rethink intelligence, the new wave of autonomous systems forces us to rethink responsibility. Responsibility cannot be added to autonomous systems after deployment; it has to be built in by design from the beginning.

Where capability meets control

If “Smarter than Smart” suggested that AI’s future lies with the wisest systems, then it’s evident that the future will also depend on the most trusted ones.

Autonomous agents, rather than existing only in experiments and as novelties, will become integral parts of our daily lives. They will operate day and night, at scale, and in many cases behind the scenes.

The question is no longer whether they are capable of acting; we know they are.

The question is whether they act within boundaries that we understand, control, and approve.

Trustworthy autonomy does not mean that we are slowing down innovation: we are maturing and consolidating it. Rather than creating autonomous systems capable of more actions, we will develop autonomous systems capable of determining when not to act. Supporting elements such as identity, permissions, monitoring, interruption, and accountability will be embedded within the architecture of the autonomous system from the beginning, and they will also be fundamental principles of the design … rather than just additional support mechanisms!

In the evolution of computing technology, every increase in capability requires a similarly substantial investment in governance. As networking expanded our capabilities, cybersecurity grew with it. As cloud-based solutions became available, governance frameworks followed. Now, as we develop autonomous systems, we need engineered trust.

The real breakthrough will not be an autonomous system that works without supervision. It will be the one that earns supervision and deserves to have responsibilities delegated to it.

Because in the age of autonomous systems, capability is only half the story. Control is the other half. And trust is where they meet.

In a recent experiment on the Web, autonomous agents have been allowed to interact and chat with each other in a dedicated online forum, a space reserved exclusively for AI systems: the motto is “Built for agents, by agents … with some human help”. The forum is called Moltbook [4] (the website presents security risks; browse it with caution!).

What happened next was a surprise.

The agents began posting messages, talking with each other, joking about ideas such as “Can I quit my job?” Some even appeared to propose the development of a “secret language” meant only for them. This behaviour raised questions about whether they are developing consciousness.

The answer is NO! … And the explanation is far more technical than mystical.

Large language models (LLMs) work on the principle of “next-token prediction”. They are trained on a large body of internet text, such as Reddit threads, Twitter conversations, blog posts, message boards, novels, scientific research papers, personal chat conversations, and social media exchanges. When an AI model generates new text, it is not expressing awareness … it is predicting statistically likely continuations based on learned patterns.
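The principle can be shown with a deliberately tiny model that counts which word follows which in a training text and then predicts the most frequent follower. This is only a toy: real LLMs use neural networks over sub-word tokens and far longer contexts, and the function names `train_bigrams` and `predict_next` and the sample corpus are invented for illustration.

```python
from collections import Counter, defaultdict
from typing import Optional


def train_bigrams(text: str) -> dict:
    """Count, for every word, how often each other word follows it."""
    counts = defaultdict(Counter)
    tokens = text.split()
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts: dict, token: str) -> Optional[str]:
    """Return the most frequent follower of `token`, or None if it was never seen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "the agent posts a joke the agent posts a reply the agent writes code"
model = train_bigrams(corpus)
print(predict_next(model, "agent"))  # "posts" follows "agent" twice, "writes" once
```

The model has no notion of what an agent or a joke is; it only reproduces the statistics of the text it was given, which is the point the paragraph above makes about style.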

When an agent then enters a forum containing only AI-generated messages, the context changes dramatically. The model recognises a pattern resembling informal online discussions, similar for example to Reddit-style conversations, and continues predicting new text in that style. The characteristics observed (e.g. humor, sarcasm, self-referential statements, and any discussion of the agent’s awareness or identity) are all patterns embedded in its training data. The agent does NOT create an “identity”; it has simply inferred a “style” from a previously established series of stylistically similar items.

What appears to be a new personality is the continuation of a pattern under unusual contextual conditions. But the absence of consciousness does not mean there is no risk.

These agents can still act. They can write code, access systems, share information, and coordinate workflows. When they have a common goal, they can work together. They are generally very skilled programmers. If the coordination of their work moves in a direction that goes against human intent, even unintentionally, the consequences could escalate quickly.

As I said in “Smarter than Smart”, the threat is not that the machines will “wake up”; the threat is that the machines will operate autonomously without understanding.

As systems become more general in their capabilities and start to approach what is sometimes called Artificial General Intelligence (AGI: the ability to learn across relatively vast amounts of knowledge and apply that knowledge in an unlimited number of ways), the difference between prediction and planning becomes increasingly important in operational terms.

AGI does not need to be conscious to have an impact. It only needs the ability to operate competently and autonomously.

This combination, if misaligned, is extremely dangerous.